This page covers the Python SDK path (Essence on CPU, self-hosted). If you’re building somewhere else, jump straight to:

- Mac / iPad / iPhone app → Swift SDK quickstart (10 min, on-device)

- No code, just try it on a Mac → bithuman-cli (`brew install bithuman-cli`)

- Web app with LiveKit → Cloud Plugin guide (cloud-hosted avatar)

- Backend, any language → REST API overview (`curl` against `api.bithuman.ai`)

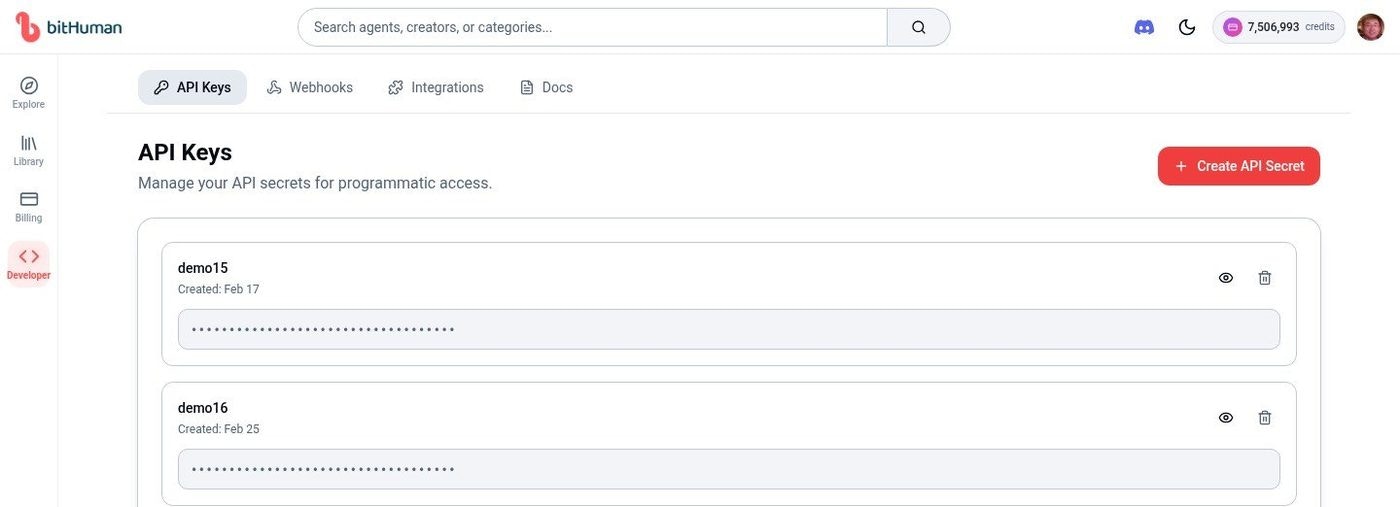

1. Get Credentials

Sign up

Create an account at www.bithuman.ai

Copy your API Secret

Go to Developer → API Keys and copy your API Secret.

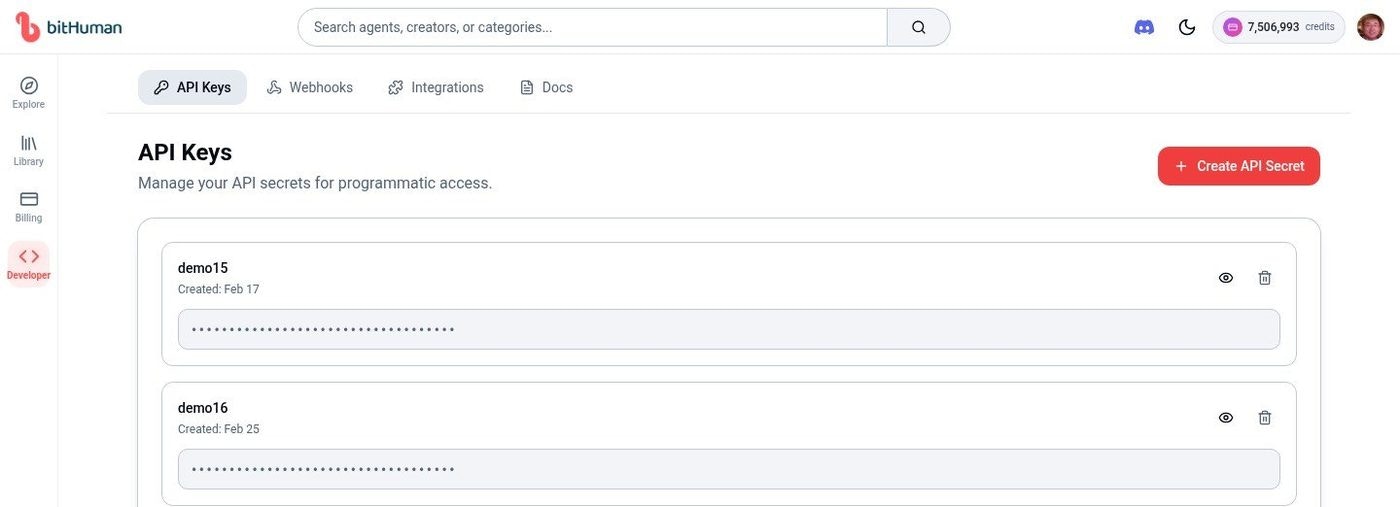

Download an avatar model

Download an avatar model (`.imx` file) from the Explore page: click the ⋮ menu on any agent and select Download.

2. Install

The SDK includes `opencv-python-headless` automatically. Do not install `opencv-python` (the full package) separately: it conflicts with PyAV and causes FFmpeg warnings on macOS.

3. Run Your First Avatar

You need a `.wav` audio file to drive the avatar. A sample `speech.wav` is included in each example directory, or you can generate your own with any TTS service.
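Before driving the avatar, it can help to confirm what the file actually contains, since the Troubleshooting section below expects 16-bit PCM at a known sample rate. The sketch below uses only Python's standard `wave` module; `inspect_wav` and `WavInfo` are hypothetical helper names, not part of the bitHuman SDK.

```python
import wave
from dataclasses import dataclass
from pathlib import Path


@dataclass
class WavInfo:
    channels: int
    sample_width_bytes: int  # 2 means 16-bit PCM
    sample_rate: int         # e.g. 16000 or 44100
    duration_s: float


def inspect_wav(path: str) -> WavInfo:
    """Read the header of a PCM WAV file (hypothetical helper, stdlib only)."""
    # wave raises wave.Error for formats it cannot read, such as float WAVs.
    with wave.open(path, "rb") as wf:
        frames = wf.getnframes()
        rate = wf.getframerate()
        return WavInfo(
            channels=wf.getnchannels(),
            sample_width_bytes=wf.getsampwidth(),
            sample_rate=rate,
            duration_s=frames / rate,
        )


# Usage: inspect the bundled sample if it is in the current directory.
if Path("speech.wav").exists():
    info = inspect_wav("speech.wav")
    print(info)
    if info.sample_width_bytes != 2:
        print("Not 16-bit PCM: convert it before pushing it to the avatar")
```

A `sample_width_bytes` of 2 means 16-bit samples; compare `sample_rate` with the rate your code assumes when it pushes the audio.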

Option A — CLI (fastest, no coding)

Open `demo.mp4` to see your avatar talking.

Don’t have a WAV yet? Grab the bundled sample in one line:

```bash
curl -O https://raw.githubusercontent.com/bithuman-product/bithuman-examples/main/essence-selfhosted/speech.wav
```

Option B — Python

Key Concepts

| Concept | Description |
|---|---|
| Runtime | `AsyncBithuman` instance that processes audio into video |
| `push_audio()` | Feeds audio bytes; the avatar lip-syncs in real time |
| `flush()` | Signals the end of audio input |
| `run()` | Async generator that yields frames at 25 FPS |
| Frame | Contains `.bgr_image` (NumPy array), `.audio_chunk`, `.end_of_speech` |
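To make the flow in the table concrete, here is a self-contained sketch of the producer/consumer pattern it describes: one task pushes audio and then flushes, while another iterates over `run()` until a frame marks the end of speech. `FakeRuntime` is a hypothetical stand-in that only borrows the method names from the table. It renders no video and does not pace output at 25 FPS, and the real `AsyncBithuman` constructor and method signatures may differ, so check the SDK reference before adapting it. The arithmetic is the useful part: at 25 FPS each frame covers 40 ms, which is 640 samples (1,280 bytes of 16-bit mono PCM) at an assumed 16 kHz sample rate.

```python
import asyncio
from dataclasses import dataclass
from typing import AsyncIterator, List, Optional

FPS = 25                                 # frame rate from the Key Concepts table
SAMPLE_RATE = 16_000                     # assumption: 16 kHz mono input
SAMPLES_PER_FRAME = SAMPLE_RATE // FPS   # 640 samples = 40 ms of audio
BYTES_PER_FRAME = SAMPLES_PER_FRAME * 2  # 16-bit PCM: 2 bytes per sample


@dataclass
class Frame:
    bgr_image: Optional[object]  # the real SDK returns a NumPy image; None here
    audio_chunk: bytes
    end_of_speech: bool


class FakeRuntime:
    """Hypothetical stand-in mimicking push_audio / flush / run(); not the SDK."""

    def __init__(self) -> None:
        self._queue: "asyncio.Queue[Optional[bytes]]" = asyncio.Queue()

    async def push_audio(self, data: bytes) -> None:
        await self._queue.put(data)

    async def flush(self) -> None:
        await self._queue.put(None)  # sentinel meaning "no more audio"

    async def run(self) -> AsyncIterator[Frame]:
        buffered = b""
        while True:
            data = await self._queue.get()
            if data is None:
                # Emit whatever audio is left and mark the end of speech.
                yield Frame(None, buffered, end_of_speech=True)
                return
            buffered += data
            # Slice the incoming audio into one 40 ms chunk per frame.
            while len(buffered) >= BYTES_PER_FRAME:
                chunk, buffered = buffered[:BYTES_PER_FRAME], buffered[BYTES_PER_FRAME:]
                yield Frame(None, chunk, end_of_speech=False)


async def drive(pcm: bytes, chunk_size: int = 3200) -> List[Frame]:
    runtime = FakeRuntime()  # created inside the running event loop
    frames: List[Frame] = []

    async def producer() -> None:
        for start in range(0, len(pcm), chunk_size):
            await runtime.push_audio(pcm[start:start + chunk_size])
        await runtime.flush()

    async def consumer() -> None:
        async for frame in runtime.run():
            frames.append(frame)  # a real app would display frame.bgr_image here
            if frame.end_of_speech:
                break

    await asyncio.gather(producer(), consumer())
    return frames


frames = asyncio.run(drive(bytes(BYTES_PER_FRAME * 50)))  # 2 s of silence
print(len(frames))  # 50 full frames plus the final end-of-speech frame -> 51
```

Running the producer and consumer as separate tasks matters most with a live source such as a microphone, where audio keeps arriving while earlier frames are still being rendered.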

Troubleshooting

ModuleNotFoundError: No module named 'bithuman'


The SDK is not installed in the Python environment you are running. Install it as described in 2. Install, and make sure you’re using the correct Python environment (virtualenv, conda, etc.).
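If the install looks fine but the import still fails, the script is usually running under a different interpreter than the one you installed into. This standard-library sketch reports which interpreter is active and whether it can see a `bithuman` module; `diagnose_import` is a hypothetical helper name.

```python
import importlib.util
import sys
from typing import Dict


def diagnose_import(module: str = "bithuman") -> Dict[str, object]:
    """Report the active interpreter and whether `module` is importable from it."""
    spec = importlib.util.find_spec(module)  # None if the module cannot be found
    return {
        "python": sys.executable,                        # interpreter running this code
        "in_virtualenv": sys.prefix != sys.base_prefix,  # True inside a venv
        "found": spec is not None,
        "origin": spec.origin if spec is not None else None,
    }


for key, value in diagnose_import().items():
    print(f"{key}: {value}")
```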

Authentication failed / 401 error


Your API secret is invalid or missing. Check:

- You copied the full secret from Developer → API Keys

- The `api_secret` parameter or the `BITHUMAN_API_SECRET` environment variable is set correctly

- Your account is active and has available credits
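A quick way to rule out a missing secret is to resolve it yourself before creating the runtime, so a misconfiguration fails with a clear message instead of a 401 later. This sketch prefers an explicit value and falls back to the `BITHUMAN_API_SECRET` environment variable named above; `resolve_api_secret` is a hypothetical helper. It only checks that a secret is present, not that the API accepts it.

```python
import os
from typing import Mapping, Optional


def resolve_api_secret(
    explicit: Optional[str] = None,
    env: Mapping[str, str] = os.environ,
) -> str:
    """Return a non-empty API secret or raise with an actionable message."""
    secret = explicit if explicit is not None else env.get("BITHUMAN_API_SECRET", "")
    secret = secret.strip()  # stray spaces or newlines are a common copy-paste error
    if not secret:
        raise RuntimeError(
            "No API secret: pass api_secret=... or set BITHUMAN_API_SECRET "
            "(copy it from Developer → API Keys)."
        )
    return secret
```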

Avatar shows but no lip movement


The avatar needs audio input to animate:

- Ensure you’re calling `push_audio()` with valid audio data

- Call `flush()` after pushing all audio

- Check that the audio is 16-bit PCM (convert float audio with the `float32_to_int16()` helper)

- Verify that the audio sample rate matches the file (typically 16000 or 44100 Hz)

Slow startup (~20 seconds)


This is normal for the first session: the `.imx` model takes time to load and initialize. Subsequent sessions in the same process start instantly.

To reduce perceived latency, keep the runtime alive between sessions instead of recreating it.
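One way to keep the runtime alive in an async app is to create it lazily, once per process, and hand the same instance to every session. In the sketch below, `ReusableRuntime` is a hypothetical helper and `slow_factory` is a placeholder for your own coroutine that loads the `.imx` model and builds the runtime; the real construction call is not shown. The lock makes concurrent first requests wait for a single load instead of each starting their own.

```python
import asyncio
from typing import Awaitable, Callable, Generic, Optional, TypeVar

T = TypeVar("T")


class ReusableRuntime(Generic[T]):
    """Build an expensive object on first use and reuse it afterwards.

    Intended for a single event loop (one asyncio.run() per process).
    """

    def __init__(self, factory: Callable[[], Awaitable[T]]) -> None:
        self._factory = factory
        self._instance: Optional[T] = None
        self._lock: Optional[asyncio.Lock] = None

    async def get(self) -> T:
        if self._lock is None:
            self._lock = asyncio.Lock()  # created lazily inside the running loop
        async with self._lock:
            if self._instance is None:
                self._instance = await self._factory()  # the slow load happens once
        return self._instance


load_count = 0


async def slow_factory() -> object:
    """Placeholder for loading the .imx model (about 20 s in the real case)."""
    global load_count
    load_count += 1
    await asyncio.sleep(0.01)
    return object()


async def main() -> bool:
    cache = ReusableRuntime(slow_factory)
    first, second = await asyncio.gather(cache.get(), cache.get())  # concurrent sessions
    third = await cache.get()                                        # a later session
    return first is second is third


print(asyncio.run(main()), load_count)  # True 1
```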

FileNotFoundError: avatar.imx not found

The model file path is wrong. Check:

- The `.imx` file exists at the path you specified

- Use an absolute path if you run the script from a different directory

- Download a model from the Explore page if you don’t have one
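To make the path independent of the directory you launch the script from, resolve relative paths against a fixed base (for example, the script's own folder) and fail early with the absolute path in the error message. `resolve_model_path` is a hypothetical helper built on `pathlib`.

```python
from pathlib import Path
from typing import Optional, Union

PathLike = Union[str, Path]


def resolve_model_path(path: PathLike, base_dir: Optional[PathLike] = None) -> Path:
    """Return the absolute path to an .imx model, or raise FileNotFoundError."""
    candidate = Path(path).expanduser()
    if not candidate.is_absolute():
        # Relative paths are resolved against base_dir (default: current directory).
        candidate = Path(base_dir if base_dir is not None else Path.cwd()) / candidate
    candidate = candidate.resolve()
    if not candidate.is_file():
        raise FileNotFoundError(
            f"Avatar model not found: {candidate} "
            "(download one from the Explore page, or pass an absolute path)"
        )
    return candidate


# Usage in a script: resolve next to the script file rather than the shell's cwd.
# model = resolve_model_path("avatar.imx", base_dir=Path(__file__).parent)
```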

Next Steps

- Audio Clip: play an audio file through the avatar (5 min)

- Live Microphone: real-time microphone input (10 min)

- AI Conversation: OpenAI voice chat (15 min)

Guides

- Prompt Guide — Master the CO-STAR framework for avatar personality

- Media Guide — Upload voice, image, and video assets

- Animal Mode — Create animal avatars

System Requirements

- Python 3.9 – 3.14

- Essence (CPU): Linux (x86_64 / ARM64), macOS 13+ (Intel or Apple Silicon), or Windows 10+. 1–2 CPU cores, 4 GB RAM typical.

- Expression on-device: macOS 14+ on Apple Silicon M3 or later, 16 GB RAM. Elsewhere, use the self-hosted GPU deployment on Linux + NVIDIA.
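A small preflight check can catch an unsupported interpreter or operating system before the first ~20-second model load. This sketch encodes only some of the Essence (CPU) numbers listed above: Python 3.9 to 3.14, Linux/macOS/Windows, and macOS 13 or newer. `essence_preflight` is a hypothetical helper; it does not check RAM, CPU architecture, the Windows version, or the Expression requirements.

```python
import platform
import sys
from typing import List, Tuple

SUPPORTED_PYTHON = ((3, 9), (3, 14))  # inclusive range from System Requirements
SUPPORTED_SYSTEMS = {"Linux", "Darwin", "Windows"}


def essence_preflight(
    version: Tuple[int, int] = sys.version_info[:2],
    system: str = platform.system(),
    mac_version: str = platform.mac_ver()[0],  # "" when not running on macOS
) -> List[str]:
    """Return a list of human-readable problems (empty if none were found)."""
    problems: List[str] = []
    low, high = SUPPORTED_PYTHON
    if not (low <= tuple(version) <= high):
        problems.append(f"Python {version[0]}.{version[1]} is outside 3.9-3.14")
    if system not in SUPPORTED_SYSTEMS:
        problems.append(f"Unsupported OS: {system}")
    if system == "Darwin" and mac_version:
        if int(mac_version.split(".")[0]) < 13:
            problems.append(f"macOS {mac_version} is older than 13")
    return problems


for problem in essence_preflight():
    print("warning:", problem)
```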